Downloading Azure Media Services Videos With FFMPEG

I ran into an unusual challenge today: I needed to cut a clip out of a video hosted with Azure Media Services, for reference. The video in question is Into Focus, the “show within a show” that aired at Microsoft’s Ignite conference earlier this week.

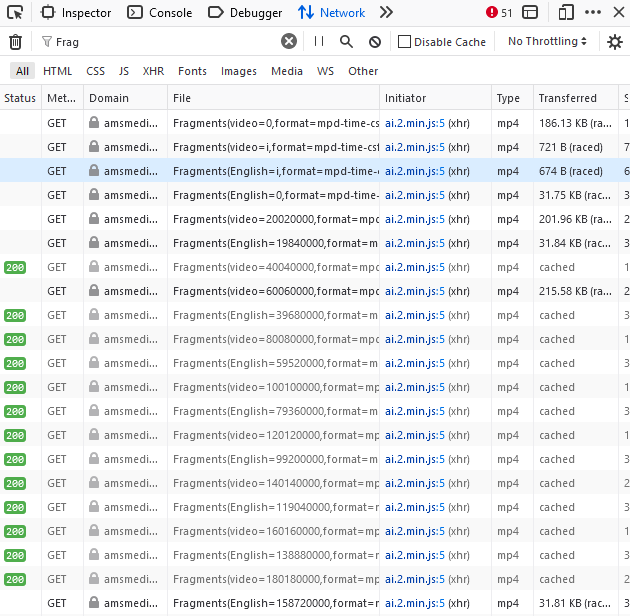

Ordinarily, since I work on the team that produced those pages, I could have reached out, asked where the source MP4 lives, and gotten it that way. But I needed it that very moment (thank you, instant gratification). So I fired up the browser’s developer tools and hit play, hoping to capture a request to something like https://foobar/video.mp4. But wait, what am I getting instead?

A bunch of fragments! Azure Media Services, which powers the player embedded on the docs.microsoft.com page, doesn’t hand out the URL of the full file. Instead, it does the smart thing and buffers only the parts needed for immediate playback. The fragments are still MP4 chunks, but they can’t be played on their own if you copy and paste their URLs; they have to be assembled together.

This is all fine and dandy, but I still needed to cut a part of the whole video for my project. Let’s dig further! When Azure Media Services first starts playing a video, it downloads a manifest that contains all the information needed for streaming. Again, a calculated and smart move: the manifest describes the available quality levels, so the player can adapt to the device and the available bandwidth, and it lists the supported audio tracks.

Going back to the video I’m trying to get, I can pull the manifest request out of the browser’s Network inspector. It turns out to be:

https://amsmediusw-ak.studios.ms/e62c1901-db57-413c-839a-7868389de4f1/STUDIO104.ism/manifest(format=mpd-time-csf)

That’s an intimidating URL with intimidating content:

```xml
<?xml version="1.0" encoding="utf-8"?>
<MPD mediaPresentationDuration="PT1H3M8.096S" minBufferTime="PT3S" profiles="urn:mpeg:dash:profile:isoff-live:2011" type="static" xmlns="urn:mpeg:dash:schema:mpd:2011" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance">
  <Period>
    <AdaptationSet bitstreamSwitching="false" codecs="avc1.4d4028" contentType="video" group="1" id="1" maxHeight="1080" maxWidth="1920" mimeType="video/mp4" profiles="ccff" segmentAlignment="true" startWithSAP="1">
      <SegmentTemplate initialization="QualityLevels($Bandwidth$)/Fragments(video=i,format=mpd-time-csf)" media="QualityLevels($Bandwidth$)/Fragments(video=$Time$,format=mpd-time-csf)" timescale="10000000">
        <SegmentTimeline>
          <S d="20020000" r="1891"/>
          <S d="2669333"/>
        </SegmentTimeline>
      </SegmentTemplate>
      <Representation bandwidth="6000000" height="1080" id="1_V_video_1" width="1920"/>
      <Representation bandwidth="3000000" codecs="avc1.4d401f" height="720" id="1_V_video_2" width="1280"/>
      <Representation bandwidth="2000000" codecs="avc1.4d401f" height="540" id="1_V_video_3" width="960"/>
      <Representation bandwidth="1300000" codecs="avc1.4d401e" height="360" id="1_V_video_4" width="640"/>
      <Representation bandwidth="800000" codecs="avc1.4d4015" height="288" id="1_V_video_5" width="512"/>
      <Representation bandwidth="500000" codecs="avc1.4d400d" height="216" id="1_V_video_6" width="384"/>
      <Representation bandwidth="350000" codecs="avc1.4d400d" height="180" id="1_V_video_7" width="320"/>
    </AdaptationSet>
    <AdaptationSet bitstreamSwitching="false" codecs="mp4a.40.2" contentType="audio" group="5" id="2" lang="und" mimeType="audio/mp4" profiles="ccff" segmentAlignment="true">
      <Label>English</Label>
      <SegmentTemplate initialization="QualityLevels($Bandwidth$)/Fragments(English=i,format=mpd-time-csf)" media="QualityLevels($Bandwidth$)/Fragments(English=$Time$,format=mpd-time-csf)" timescale="10000000">
        <SegmentTimeline>
          <S d="19840000" r="1908"/>
          <S d="6400000"/>
        </SegmentTimeline>
      </SegmentTemplate>
      <Representation audioSamplingRate="48000" bandwidth="128000" id="5_A_English_1"/>
    </AdaptationSet>
    <AdaptationSet bitstreamSwitching="false" codecs="mp4a.40.2" contentType="audio" group="5" id="3" lang="und" mimeType="audio/mp4" profiles="ccff" segmentAlignment="true">
      <Label>Audio Description</Label>
      <SegmentTemplate initialization="QualityLevels($Bandwidth$)/Fragments(Audio Description=i,format=mpd-time-csf)" media="QualityLevels($Bandwidth$)/Fragments(Audio Description=$Time$,format=mpd-time-csf)" timescale="10000000">
        <SegmentTimeline>
          <S d="19840000" r="1908"/>
          <S d="6400000"/>
        </SegmentTimeline>
      </SegmentTemplate>
      <Representation audioSamplingRate="48000" bandwidth="128000" id="5_A_Audio Description_1"/>
    </AdaptationSet>
    <AdaptationSet bitstreamSwitching="false" codecs="mp4a.40.2" contentType="audio" group="5" id="4" lang="und" mimeType="audio/mp4" profiles="ccff" segmentAlignment="true">
      <Label>French</Label>
      <SegmentTemplate initialization="QualityLevels($Bandwidth$)/Fragments(French=i,format=mpd-time-csf)" media="QualityLevels($Bandwidth$)/Fragments(French=$Time$,format=mpd-time-csf)" timescale="10000000">
        <SegmentTimeline>
          <S d="19840000" r="1908"/>
          <S d="6400000"/>
        </SegmentTimeline>
      </SegmentTemplate>
      <Representation audioSamplingRate="48000" bandwidth="128000" id="5_A_French_1"/>
    </AdaptationSet>
    <AdaptationSet bitstreamSwitching="false" codecs="mp4a.40.2" contentType="audio" group="5" id="5" lang="und" mimeType="audio/mp4" profiles="ccff" segmentAlignment="true">
      <Label>German</Label>
      <SegmentTemplate initialization="QualityLevels($Bandwidth$)/Fragments(German=i,format=mpd-time-csf)" media="QualityLevels($Bandwidth$)/Fragments(German=$Time$,format=mpd-time-csf)" timescale="10000000">
        <SegmentTimeline>
          <S d="19840000" r="1908"/>
          <S d="6400000"/>
        </SegmentTimeline>
      </SegmentTemplate>
      <Representation audioSamplingRate="48000" bandwidth="128000" id="5_A_German_1"/>
    </AdaptationSet>
    <AdaptationSet bitstreamSwitching="false" codecs="mp4a.40.2" contentType="audio" group="5" id="6" lang="und" mimeType="audio/mp4" profiles="ccff" segmentAlignment="true">
      <Label>Japanese</Label>
      <SegmentTemplate initialization="QualityLevels($Bandwidth$)/Fragments(Japanese=i,format=mpd-time-csf)" media="QualityLevels($Bandwidth$)/Fragments(Japanese=$Time$,format=mpd-time-csf)" timescale="10000000">
        <SegmentTimeline>
          <S d="19840000" r="1908"/>
          <S d="6400000"/>
        </SegmentTimeline>
      </SegmentTemplate>
      <Representation audioSamplingRate="48000" bandwidth="128000" id="5_A_Japanese_1"/>
    </AdaptationSet>
    <AdaptationSet bitstreamSwitching="false" codecs="mp4a.40.2" contentType="audio" group="5" id="7" lang="und" mimeType="audio/mp4" profiles="ccff" segmentAlignment="true">
      <Label>Mandarin</Label>
      <SegmentTemplate initialization="QualityLevels($Bandwidth$)/Fragments(Mandarin=i,format=mpd-time-csf)" media="QualityLevels($Bandwidth$)/Fragments(Mandarin=$Time$,format=mpd-time-csf)" timescale="10000000">
        <SegmentTimeline>
          <S d="19840000" r="1908"/>
          <S d="6400000"/>
        </SegmentTimeline>
      </SegmentTemplate>
      <Representation audioSamplingRate="48000" bandwidth="128000" id="5_A_Mandarin_1"/>
    </AdaptationSet>
    <AdaptationSet bitstreamSwitching="false" codecs="mp4a.40.2" contentType="audio" group="5" id="8" lang="und" mimeType="audio/mp4" profiles="ccff" segmentAlignment="true">
      <Label>Spanish</Label>
      <SegmentTemplate initialization="QualityLevels($Bandwidth$)/Fragments(Spanish=i,format=mpd-time-csf)" media="QualityLevels($Bandwidth$)/Fragments(Spanish=$Time$,format=mpd-time-csf)" timescale="10000000">
        <SegmentTimeline>
          <S d="19840000" r="1908"/>
          <S d="6400000"/>
        </SegmentTimeline>
      </SegmentTemplate>
      <Representation audioSamplingRate="48000" bandwidth="128000" id="5_A_Spanish_1"/>
    </AdaptationSet>
  </Period>
</MPD>
```

Worry not, though: this is all part of the flexibility of Azure Media Services. The file describes the content, and the embedded player is responsible for fetching the right chunks based on the characteristics of the system the content is played on. Notice that each available audio track gets its own adaptation set, and there are quite a few: English, French, German, Japanese, Mandarin, Spanish, and an audio description track.

This doesn’t help me, though. I still don’t have a direct link that would let me download the file, and I don’t want to write a custom script to reassemble the MP4 chunks. After a bit of digging, I found out that I can use ffmpeg, an open-source video processing tool, to do this for me. To do that, however, I need an M3U playlist. But how do I get one? Azure Media Services already has me covered: it can generate manifests dynamically in other streaming formats, so I just need to tweak the manifest URL:

https://amsmediusw-ak.studios.ms/e62c1901-db57-413c-839a-7868389de4f1/STUDIO104.ism/manifest(format=m3u8-aapl-v3)

The only change is the format=m3u8-aapl-v3 argument inside the parentheses at the end: I am requesting Apple’s HTTP Live Streaming (HLS) format, version 3. And the content of that manifest is a little less intimidating!

```
#EXTM3U
#EXT-X-VERSION:3
#EXT-X-STREAM-INF:BANDWIDTH=504836,RESOLUTION=320x180,CODECS="avc1.4d400d,mp4a.40.2"
QualityLevels(350000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=658136,RESOLUTION=384x216,CODECS="avc1.4d400d,mp4a.40.2"
QualityLevels(500000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=964736,RESOLUTION=512x288,CODECS="avc1.4d4015,mp4a.40.2"
QualityLevels(800000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=1475736,RESOLUTION=640x360,CODECS="avc1.4d401e,mp4a.40.2"
QualityLevels(1300000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=2191136,RESOLUTION=960x540,CODECS="avc1.4d401f,mp4a.40.2"
QualityLevels(2000000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=3213136,RESOLUTION=1280x720,CODECS="avc1.4d401f,mp4a.40.2"
QualityLevels(3000000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=6279136,RESOLUTION=1920x1080,CODECS="avc1.4d4028,mp4a.40.2"
QualityLevels(6000000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=138976,CODECS="mp4a.40.2"
QualityLevels(128000)/Manifest(Spanish,format=m3u8-aapl-v3)
```

There’s a catch here, though. If you look at the contents of the M3U, you might notice that every video variant URI ends with audiotrack=Spanish. Because our video has multiple audio tracks, the generated M3U manifest seems to default to the last audio adaptation set in the original one. Using this manifest as-is would leave me with a 2GB+ file that has a Spanish audio track, when I needed the English one.
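The catch is easy to miss by eye but simple to check programmatically. Below is a small Python sketch that pairs each `#EXT-X-STREAM-INF` tag with the URI on the line that follows it (which is how an HLS master playlist is laid out) and pulls out the `audiotrack` argument. `parse_master` is a hypothetical helper, and the attribute regex is deliberately simplified rather than a complete HLS parser.

```python
import re

# Trimmed copy of the master playlist above (3 of the 7 video variants).
MASTER = """#EXTM3U
#EXT-X-VERSION:3
#EXT-X-STREAM-INF:BANDWIDTH=504836,RESOLUTION=320x180,CODECS="avc1.4d400d,mp4a.40.2"
QualityLevels(350000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=3213136,RESOLUTION=1280x720,CODECS="avc1.4d401f,mp4a.40.2"
QualityLevels(3000000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=6279136,RESOLUTION=1920x1080,CODECS="avc1.4d4028,mp4a.40.2"
QualityLevels(6000000)/Manifest(video,format=m3u8-aapl-v3,audiotrack=Spanish)
#EXT-X-STREAM-INF:BANDWIDTH=138976,CODECS="mp4a.40.2"
QualityLevels(128000)/Manifest(Spanish,format=m3u8-aapl-v3)
"""

# KEY=value pairs; quoted values (like CODECS) may themselves contain commas.
ATTR = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')
# The audiotrack argument inside Manifest(...), if present.
AUDIOTRACK = re.compile(r"audiotrack=([^,)]+)")


def parse_master(text):
    """Pair each #EXT-X-STREAM-INF tag with the URI line that follows it."""
    variants, attrs = [], None
    for line in text.splitlines():
        line = line.strip()
        if line.startswith("#EXT-X-STREAM-INF:"):
            attrs = dict(ATTR.findall(line.split(":", 1)[1]))
        elif line and not line.startswith("#") and attrs is not None:
            track = AUDIOTRACK.search(line)
            variants.append({
                "bandwidth": int(attrs["BANDWIDTH"]),
                "resolution": attrs.get("RESOLUTION"),  # absent for audio-only
                "uri": line,
                "audiotrack": track.group(1) if track else None,
            })
            attrs = None
    return variants


variants = parse_master(MASTER)
for v in variants:
    print(v["bandwidth"], v["resolution"], v["audiotrack"])
```

Every variant that carries video reports `Spanish`, and the audio-only variant has no `audiotrack` argument at all.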

To fix this, all I need to do is add an audiotrack=English argument inside the parentheses (this is called filter composition):

https://amsmediusw-ak.studios.ms/e62c1901-db57-413c-839a-7868389de4f1/STUDIO104.ism/manifest(format=m3u8-aapl-v3,audiotrack=English)
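Because both tweaks live in the same parenthesized argument list, going from the captured DASH manifest URL to this one can be scripted. Here is a minimal Python sketch, assuming the URL always ends in `manifest(...)` as it does here; `with_manifest_filters` is a hypothetical name, not part of any Azure SDK.

```python
import re

# Matches the manifest(...) argument list at the very end of the URL.
MANIFEST_ARGS = re.compile(r"manifest\([^)]*\)$")


def with_manifest_filters(url, **filters):
    """Return url with its trailing manifest(...) arguments replaced by filters.

    Hypothetical helper: assumes the URL ends in manifest(<args>), as the
    Azure Media Services URLs in this post do. Keyword order is preserved.
    """
    args = ",".join(f"{key}={value}" for key, value in filters.items())
    # A function replacement keeps re from interpreting backslashes in args.
    new_url, count = MANIFEST_ARGS.subn(lambda _m: f"manifest({args})", url)
    if count != 1:
        raise ValueError(f"no trailing manifest(...) in {url!r}")
    return new_url


dash_url = ("https://amsmediusw-ak.studios.ms/e62c1901-db57-413c-839a-7868389de4f1"
            "/STUDIO104.ism/manifest(format=mpd-time-csf)")
hls_url = with_manifest_filters(dash_url, format="m3u8-aapl-v3", audiotrack="English")
print(hls_url)
```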

This should do it! There’s still no direct link to the file, but I’ll let ffmpeg handle the details. To download and reassemble the streamed video, I can call it from the terminal:

```
ffmpeg.exe -i "https://amsmediusw-ak.studios.ms/e62c1901-db57-413c-839a-7868389de4f1/STUDIO104.ism/manifest(format=m3u8-aapl-v3,audiotrack=English)" -c copy D:\experiments\video.mp4
```
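Since the end goal was cutting out only part of the video, ffmpeg’s `-ss` (start position) and `-t` (duration) options can trim during the same download. Below is a minimal Python sketch that builds the command as an argument list, which sidesteps shell quoting of the parentheses in the URL, and runs it with `subprocess`. The function names and example timestamps are hypothetical. With `-c copy` ffmpeg does not re-encode, so the cut will typically land on a nearby keyframe rather than the exact timestamp; dropping `-c copy` gives a frame-accurate cut at the cost of re-encoding.

```python
import subprocess


def ffmpeg_command(manifest_url, output, start=None, duration=None):
    """Build the ffmpeg argument list for remuxing a streamed manifest to a file.

    start/duration are optional ffmpeg time strings such as "00:12:30".
    """
    cmd = ["ffmpeg"]
    if start is not None:
        cmd += ["-ss", start]      # placed before -i: seek within the input
    cmd += ["-i", manifest_url]
    if duration is not None:
        cmd += ["-t", duration]    # limit how much is written to the output
    cmd += ["-c", "copy", output]  # copy streams as-is, no re-encoding
    return cmd


def cut_clip(manifest_url, output, start=None, duration=None):
    """Run ffmpeg (must be on PATH); raises CalledProcessError on failure."""
    subprocess.run(ffmpeg_command(manifest_url, output, start, duration), check=True)
```

For example, `cut_clip(url, "clip.mp4", start="00:12:30", duration="00:01:00")`, where `url` is the English HLS manifest URL, would save a one-minute clip starting around the 12:30 mark.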

In a couple of minutes, I had a working video! Now that I think about it, my instant-gratification premise was probably flawed, and I could have just asked someone for the file. But then I wouldn’t have gotten to solve this problem with ffmpeg, and let’s be real: most problems can probably be solved with ffmpeg.