Basics Of Reddit Sentiment Analysis

One thing I am really curious about is the analysis of publicly available data. It holds plenty of useful context that can shed light on important events and trends. I’ve started with a resource that is rich in user-created content: Reddit. I also wanted to focus on a local implementation, one that does not require me to sign up for a big data service such as BigQuery.

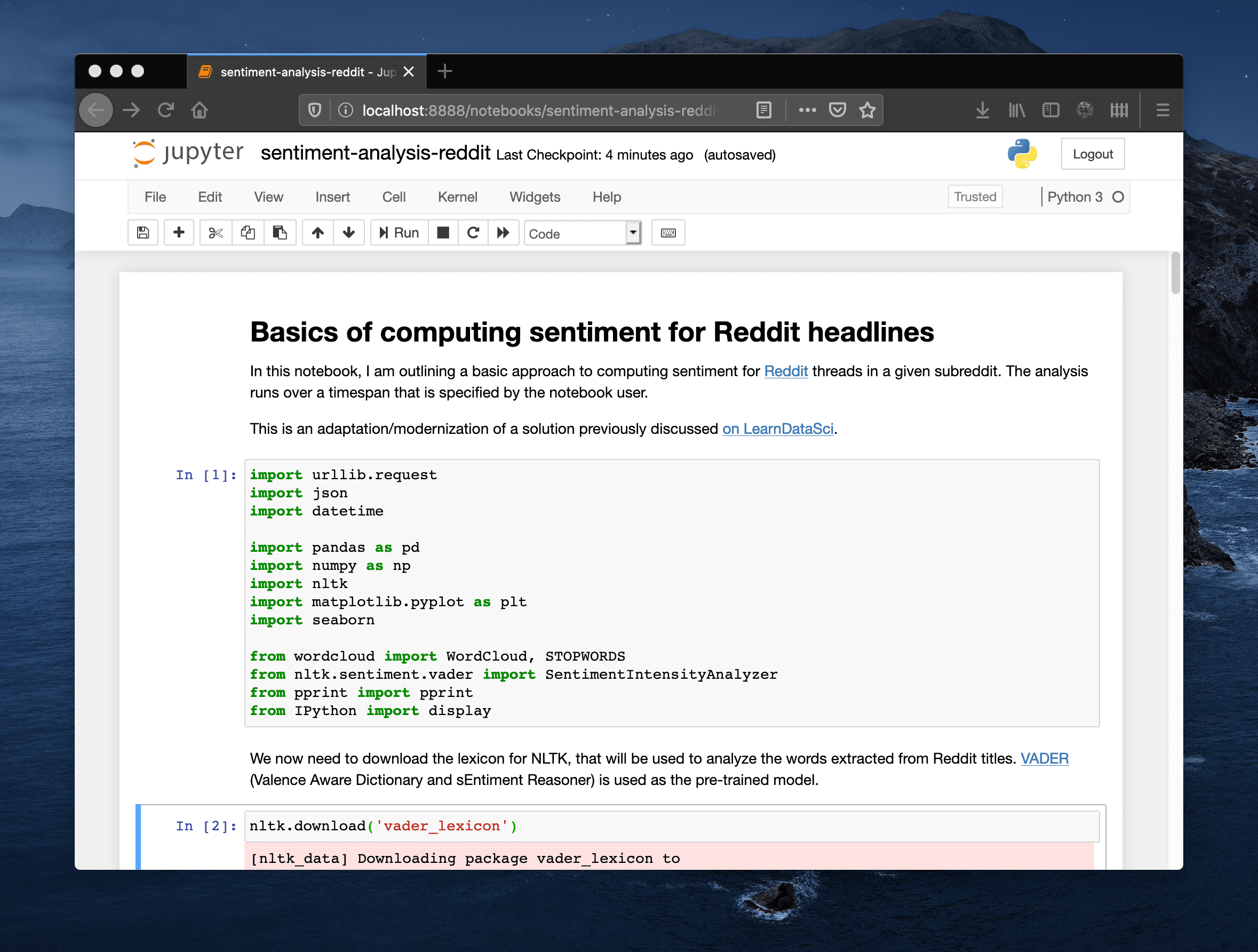

You can check out the Jupyter notebook that addresses the fundamental scenario in my GitHub repository.

To get started, make sure that you have Python 3 installed, along with the required packages. You can run `python3 -m pip install -r requirements.txt` to get the environment set up and ready.

The notebook is designed to be flexible about which subreddits are analyzed. I’ve also made sure that it can pull posts from the past, something that is not possible with the current implementation of Python-based Reddit wrappers such as PRAW.
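To make the two knobs above concrete (which subreddits, and which time window), here is a minimal sketch that filters post records that have already been downloaded. The `Post` record, the `select_posts` and `utc` helpers, and the sample data are all hypothetical and not taken from the notebook; the only detail borrowed from Reddit is that posts carry a `created_utc` Unix timestamp. How the historical posts are fetched in the first place is deliberately left out.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Post:
    # Hypothetical record for illustration; not the notebook's data model.
    subreddit: str
    title: str
    created_utc: float  # Unix timestamp in seconds (UTC)


def select_posts(posts, subreddits, start, end):
    """Keep posts from the given subreddits created in [start, end)."""
    wanted = {name.lower() for name in subreddits}
    start_ts, end_ts = start.timestamp(), end.timestamp()
    return [
        p for p in posts
        if p.subreddit.lower() in wanted and start_ts <= p.created_utc < end_ts
    ]


def utc(year, month, day):
    """Build a timezone-aware UTC datetime for midnight on the given date."""
    return datetime(year, month, day, tzinfo=timezone.utc)


# Made-up sample data, only to exercise the filter.
sample = [
    Post("python", "Type hints are great", utc(2018, 3, 1).timestamp()),
    Post("Python", "Packaging is confusing", utc(2019, 6, 1).timestamp()),
    Post("golang", "Generics proposal", utc(2018, 5, 1).timestamp()),
]

selected = select_posts(sample, ["python"], utc(2018, 1, 1), utc(2019, 1, 1))
print([p.title for p in selected])
```

Keeping the subreddit list and the date range as plain arguments means the same analysis can be re-run on a different community or period without touching the rest of the code.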

I will add more enhancements as I go, since there is still a need to pull in more data than the default API exposes. Another known issue is that the sentiment model is not trained on developer content, so analyzing technical subreddits sometimes yields sub-optimal results.
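The developer-content problem is easy to reproduce with a toy example. The sketch below is not the model used in the notebook; it is a deliberately tiny, hypothetical word-list scorer (`LEXICON` and `score` are made up for illustration) that shows how general-purpose sentiment cues misfire on technical vocabulary.

```python
import re

# Toy general-purpose lexicon, an assumption for illustration only.
LEXICON = {
    "great": 1.0, "love": 1.0, "good": 0.5,
    "kill": -1.0, "fatal": -1.0, "abort": -0.5, "terrible": -1.0,
}


def score(text):
    """Average the lexicon scores of the words found; 0.0 if none match."""
    words = re.findall(r"[a-z']+", text.lower())
    hits = [LEXICON[w] for w in words if w in LEXICON]
    return sum(hits) / len(hits) if hits else 0.0


titles = [
    "I love this new editor, great defaults",
    "How do I kill a child process after a fatal signal?",
]
for title in titles:
    print(f"{score(title):+.2f}  {title}")
```

The second title is a neutral technical question, yet it gets the most negative score possible because "kill" and "fatal" are treated as emotional words. A model trained on general text can fail in a similar way, which is why technical subreddits are harder to score well.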